SecuLayer R&D Center Wins '2025 Korean Internet Information Society Best Paper Award' for Tool-Augmented LLM Research

The research team at SecuLayer R&D Center (Team Leader Kim Jin and 4 others: Moon Gi-jung, Lee Soo-bin, Kim Won-jun, Moon Il-joo) has received the Best Paper Award at the 「2025 Korean Internet Information Society Regular General Meeting and Fall Academic Presentation Conference」 for their paper titled 'Refining the Reasoning Process of Tool-Augmented LLMs for Context Optimization.'

This research proposes a key technology to solve the 'context accumulation problem' that arises when tool-augmented LLMs interact with external tools to perform complex and lengthy tasks.

The internal thought process that an LLM (Large Language Model) generates plays a crucial role in determining its next action. As multi-turn conversations grow longer, however, unnecessary information accumulates in the context, which degrades performance (the 'Lost in the Middle' effect) and increases computational cost.

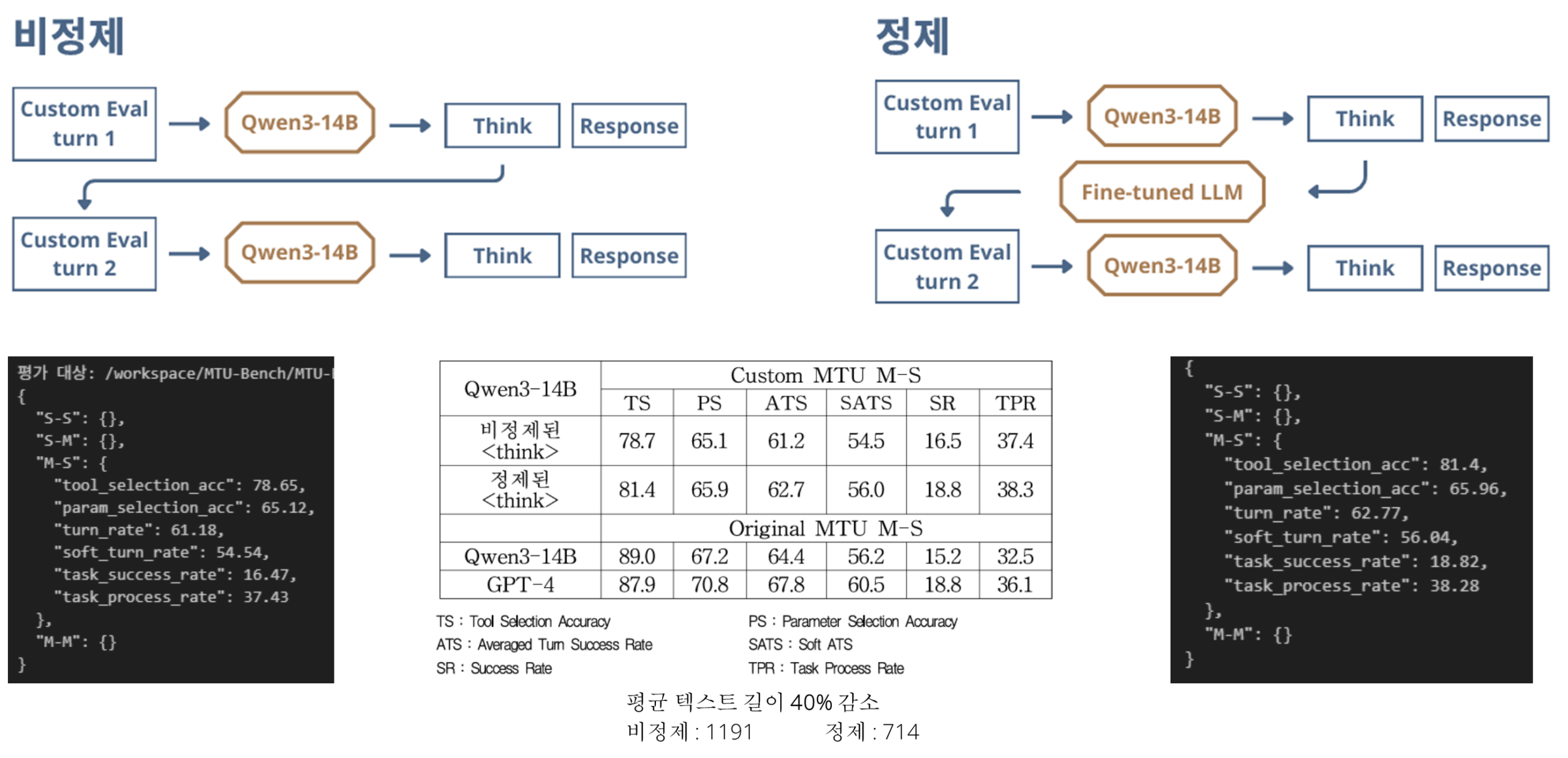

To address this inefficiency, the SecuLayer research team proposed a new methodology for selectively refining the thought process. The key points are as follows:

Thoughts related to executed tool calls: Identify only the thought processes used to trigger the relevant tool calls and summarize and refine them concisely.

Future-oriented thinking: Preserve information necessary for subsequent turns, such as planning for the next steps, and pass it along.
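The two rules above can be illustrated with a minimal Python sketch. It is not the team's implementation: the `Thought` structure, its two flags, and the `summarize` helper are hypothetical names introduced here for illustration, and the word-truncation inside `summarize` merely stands in for the fine-tuned refinement model described in the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Thought:
    """One piece of model reasoning within a turn (hypothetical structure)."""
    text: str
    triggers_tool_call: bool = False  # reasoning that led to an executed tool call
    is_future_plan: bool = False      # planning that later turns will need


def summarize(text: str, max_words: int = 12) -> str:
    """Stand-in for the learned refiner: keep only the first max_words words."""
    words = text.split()
    if len(words) <= max_words:
        return text
    return " ".join(words[:max_words]) + " ..."


def refine_thoughts(thoughts: List[Thought]) -> List[str]:
    """Keep plans verbatim, condense tool-triggering thoughts, drop the rest."""
    refined = []
    for thought in thoughts:
        if thought.is_future_plan:
            refined.append(thought.text)
        elif thought.triggers_tool_call:
            refined.append(summarize(thought.text))
        # Thoughts with neither flag are not carried into the next turn.
    return refined


turn = [
    Thought(
        "The user wants the weather in Seoul, so I should call the weather "
        "tool with city set to Seoul and units set to metric.",
        triggers_tool_call=True,
    ),
    Thought("I wonder whether the user is travelling; that is not relevant here."),
    Thought("Next, I need to convert the result to Fahrenheit.", is_future_plan=True),
]

for line in refine_thoughts(turn):
    print(line)
```

In this example, only the condensed tool-related thought and the plan for the next step survive into the following turn, while the unrelated aside is dropped.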

To train this selective refinement capability, the research team built a high-quality dataset based on relevance scores and applied a 'Guidance Loss' function that encourages the retention of core meanings to fine-tune the model.

The experimental results clearly demonstrated the effectiveness of the proposed methodology.

Improved efficiency: Reduced the average context length by approximately 40% (1191.36 -> 714.37).

Increased accuracy: Improved the success rate (SR), a key metric for measuring the accuracy of multi-turn tool calls (Unrefined 16.5% -> Refined 18.8% on Custom MTU M-S).
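As a quick check of the reported figures, the short Python sketch below recomputes the relative changes from the numbers quoted in this announcement. The helper name `relative_change` is introduced here for illustration, and the unit of context length is not stated in the announcement, so it is left unspecified.

```python
def relative_change(before: float, after: float) -> float:
    """Return the change from before to after as a fraction of before."""
    return (after - before) / before


# Average context length, unrefined -> refined (unit not stated in the source).
context_reduction = -relative_change(1191.36, 714.37)

# Success rate (SR) in percent, unrefined -> refined.
sr_gain_points = 18.8 - 16.5
sr_gain_relative = relative_change(16.5, 18.8)

print(f"Context length reduction: {context_reduction:.1%}")
print(f"SR gain: {sr_gain_points:.1f} percentage points ({sr_gain_relative:.1%} relative)")
```

The context reduction works out to about 40.0%, matching the figure above, and the success-rate improvement corresponds to 2.3 percentage points, or roughly 13.9% relative to the unrefined baseline.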

A representative from the SecuLayer R&D Center stated, "This technology is significant in that it lays the foundation for LLM-based agents to reliably perform longer and more complex tasks, and we aim to contribute to the advancement of LLM-based agent technology through follow-up research, including state maintenance evaluation and adaptive refinement mechanism development."

We appreciate your continued support for SecuLayer as we lead the convergence of LLM and AI security technologies!

Thank you.
